Signal and Noise: Inside the Lab Where Paralyzed Patients Are Learning to Type With Their Minds

The first thing you notice is the silence. Not the comfortable silence of a library, but the held-breath kind—the kind that settles over a room when something fragile and extraordinary is being attempted. In a temperature-controlled suite at a neural engineering research facility, a man named Marcus sits in a padded chair, electrodes threaded through a port in his skull, and stares at a cursor blinking on a screen. He has not moved his hands in four years. Yet the cursor is moving. Letter by letter, a sentence is assembling itself from pure intention, as if his thoughts have learned to reach through the air and touch the world again.

The Lab as Frontier Post

Brain-computer interface (BCI) research has long occupied a strange liminal space between science fiction and clinical reality, promising everything, delivering incrementally, and generating headlines that oscillate between breathless optimism and pointed skepticism. But walk into one of these facilities today, and the gap between promise and reality feels narrower than it has ever been. The equipment has changed. The software has changed. Most importantly, the patients have changed: they are no longer passive subjects enduring experimental procedures for the sake of data. They are active collaborators, debuggers, and in some cases, advocates pushing the science forward with the urgency of people who have everything to gain.

Marcus, who asked that his last name not be used, sustained a cervical spinal cord injury in a construction accident. He enrolled in a clinical trial after watching a video of an earlier participant composing an email using only neural signals. "I thought it was a trick," he says, his voice synthesizer translating the words he types on the interface into speech. "Then I thought, if it is real, I want in."

The Signal Problem Nobody Talks About

The engineering challenge at the heart of BCI research is one that rarely gets adequate attention in the popular press: the signal degrades. Electrodes implanted in neural tissue trigger an immune response, and over weeks or months, scar tissue accumulates around the recording sites, muffling the electrical chatter of nearby neurons like a hand pressed over a speaker. Many early implants lost much of their usable signal within a year. This biological rejection problem has been the quiet nemesis of every promising trial, the reason BCI research has historically felt like a perpetual dress rehearsal.

What is different now is the computational layer sitting between the electrode and the output. Machine learning models trained on neural data have become astonishingly good at inferring intent even from degraded, noisy signals. Where a 2015-era decoder might have needed clean, high-amplitude spikes to tell an intended leftward movement from a rightward one, modern algorithms can extract directional information from what looks, to an untrained eye, like static. "We are essentially doing archaeology," explains one neural engineer who has spent a decade working on decoding software. "The signal is buried under noise. We are learning to read the shards."
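To make the idea of "reading the shards" concrete, here is a toy simulation in Python. It is a sketch under stated assumptions, not any lab's actual decoder: it uses population-vector decoding, a classic technique from motor-cortex research, whereas current systems typically rely on more elaborate statistical or neural-network models. Every channel is assumed to be cosine-tuned to a preferred direction, and the noise on each channel is deliberately larger than its signal. The function names, firing rates, and noise levels are hypothetical, and the data is simulated.

```python
import math
import random

# Hypothetical toy model: each recording channel is "cosine tuned" to a
# preferred movement direction. All numbers below are assumptions.
BASELINE = 10.0   # assumed mean firing rate (spikes/s) for every channel
DEPTH = 5.0       # assumed modulation depth of each tuning curve
NOISE_SD = 8.0    # noise larger than DEPTH: any one channel is unreliable


def preferred_directions(n_channels):
    """Spread preferred directions evenly around the circle (radians)."""
    return [2 * math.pi * i / n_channels for i in range(n_channels)]


def simulate_rates(intended, preferred, rng):
    """Noisy firing rates for an intended movement angle (radians)."""
    return [
        BASELINE + DEPTH * math.cos(intended - p) + rng.gauss(0.0, NOISE_SD)
        for p in preferred
    ]


def decode_angle(rates, preferred):
    """Population-vector decoder: each channel votes for its preferred
    direction, weighted by how far its rate sits above baseline."""
    x = sum((r - BASELINE) * math.cos(p) for r, p in zip(rates, preferred))
    y = sum((r - BASELINE) * math.sin(p) for r, p in zip(rates, preferred))
    return math.atan2(y, x)


def left_or_right(angle):
    """Collapse a decoded angle into a binary left/right choice."""
    return "right" if math.cos(angle) > 0 else "left"


if __name__ == "__main__":
    rng = random.Random(42)
    preferred = preferred_directions(200)
    trials = [0.0, math.pi] * 50  # alternate rightward and leftward intent
    correct = 0
    for intended in trials:
        decoded = decode_angle(simulate_rates(intended, preferred, rng), preferred)
        correct += left_or_right(decoded) == left_or_right(intended)
    print(f"{correct}/{len(trials)} trials decoded correctly")
```

With these assumed parameters, even the best-placed single channel guesses left versus right correctly only about three times in four, yet the pooled estimate across 200 channels is almost never wrong: independent noise partly cancels when channels are summed, while the shared directional signal adds up.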

This computational resilience has extended the functional lifespan of implants and, more importantly, has opened a path toward less invasive recording methods. Researchers are now demonstrating that signals captured from the surface of the brain, or even from electrodes threaded through blood vessels into proximity with neural tissue, can yield sufficient information for meaningful control. The era of pushing electrodes into brain tissue as the only viable approach may be ending.

Where Neuralink Fits Into the Picture

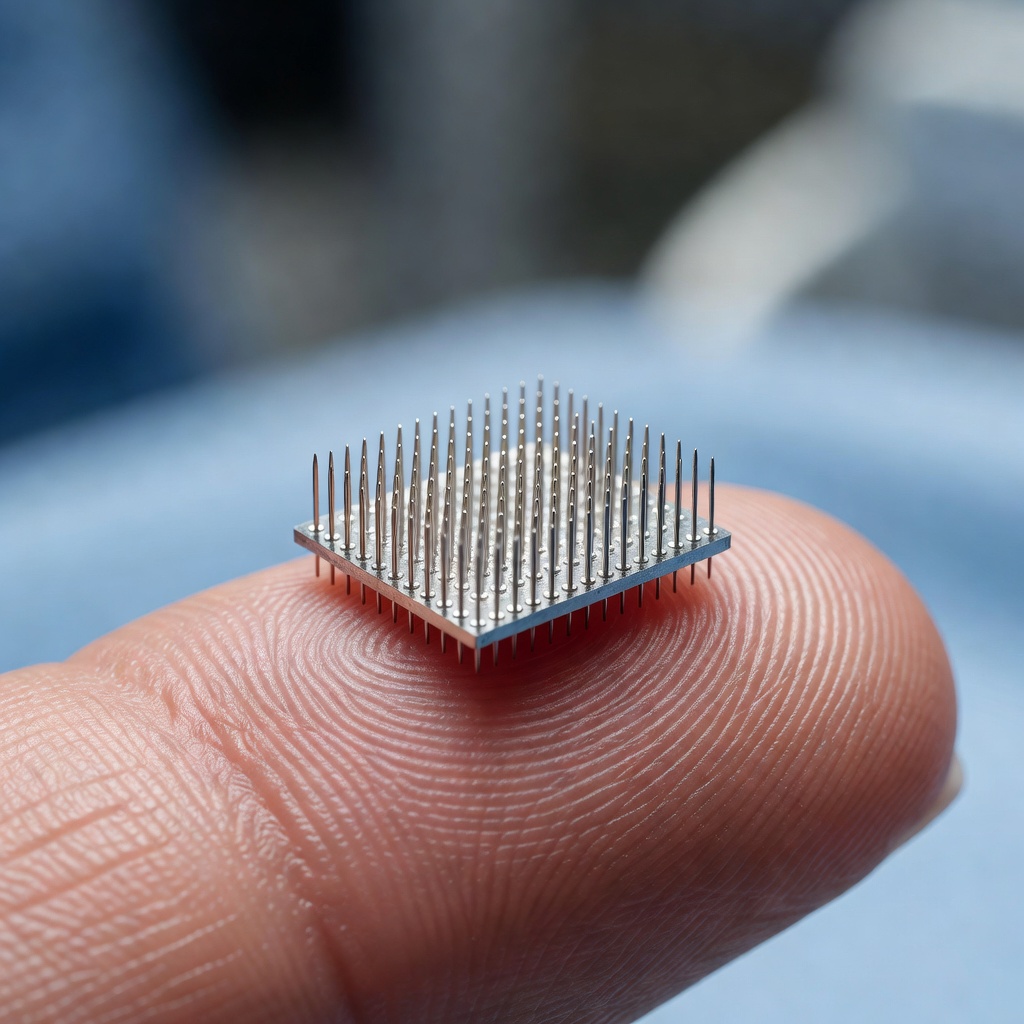

No conversation about brain-computer interfaces in 2024 and 2025 can avoid Neuralink, Elon Musk's neurotechnology company, which achieved a milestone in early 2024 when it implanted its N1 chip in a human patient for the first time. That patient, Noland Arbaugh, subsequently demonstrated on a live stream that he could control a computer cursor and play chess using neural signals alone. The moment was simultaneously a genuine scientific achievement and a masterclass in Musk-style product theater—but the underlying technology was real.

Neuralink's approach differs from legacy BCI systems in several important ways. The implant is placed by a robotic surgical system designed to avoid blood vessels with a precision no human surgeon could reliably match, which is intended to reduce the inflammatory cascade that causes signal degradation. The chip itself sits flush with the skull and communicates wirelessly with external devices, eliminating the percutaneous connectors that have historically been infection risks in other systems. And its electrode count, roughly a thousand per implant, dwarfs what most academic labs have worked with.

Musk has described Neuralink's long-term ambition not merely as a medical device company but as the foundational infrastructure for what he calls "human-AI symbiosis"—the idea that as artificial intelligence becomes more capable, humans will need a high-bandwidth neural interface to remain meaningful participants in a world increasingly shaped by machine cognition. Whether one finds this vision inspiring or alarming tends to depend heavily on one's priors about both AI trajectories and corporate motivations. What is harder to dispute is that the engineering is advancing.

Beyond Restoration: The Augmentation Horizon

The clinical framing of BCI research, focused on restoring lost function to people with paralysis or degenerative disease, commands the most immediate moral consensus. Helping a locked-in patient communicate is difficult to argue against. But the technology does not respect the line between restoration and enhancement, and the field is beginning to reckon seriously with what comes next.

Researchers at several institutions are exploring BCI applications that have nothing to do with disability. Memory augmentation, attention modulation, accelerated skill acquisition, and real-time emotional regulation are all active areas of inquiry. DARPA has funded programs specifically aimed at enhancing the cognitive performance of healthy soldiers. Consumer neurostimulation devices are already available over the counter, promising focus and relaxation by passing weak electrical currents through the scalp, though their effects remain contested.

The trajectory is clear even if the timeline is not: the same neural interface that helps Marcus type today could, in a more mature form, allow a healthy user to interact with digital systems at speeds that keyboards and touchscreens cannot approach. The question of who gets access to that capability, and what it means for social stratification, is one that neuroscientists, engineers, and policymakers are only beginning to ask in earnest.

The Patient's Perspective

Back in the lab, Marcus has finished composing his sentence. It took him about ninety seconds, which is roughly half the time it took him at his first session six months ago. The neural decoder has been learning his patterns, and he has been learning to generate cleaner, more consistent signals. It is a collaboration, not a command. "People ask me if it feels weird," he says, the cursor sliding to each letter with practiced ease. "It feels like remembering how to talk. Like the words were always there and something was just blocking them."

That description, offered by a man sitting at the raw frontier of one of the most consequential technological developments of our time, captures something that no engineering specification can: the human dimension of this work. BCI research is not ultimately about electrodes or decoding algorithms or wireless bandwidth. It is about the gap between a person and their own agency, and the extraordinary lengths to which science is going to close it.

What the Next Decade Holds

The pace of progress across the field suggests that the 2030s will look radically different from the present. Flexible, biodegradable electrodes designed to dissolve once they are no longer needed are in development. Neural dust, arrays of microscopic wireless sensors distributed through brain tissue, has been demonstrated in animal models. Less invasive systems that use ultrasound to read neural activity are moving from proof of concept to prototype. The convergence of these hardware advances with increasingly powerful AI decoders is likely to produce systems that capture and interpret neural signals with a fidelity current methods cannot match.

Whether those systems remain medical devices or become consumer products, whether they are regulated with appropriate rigor or outpaced by commercial ambition, and whether the benefits they offer are distributed equitably or reserved for the affluent: these are not engineering questions. They are choices. And unlike the signals flickering through Marcus's implant, they have not yet been decoded.