Steel Hands, Living Minds: How Tesla's Optimus and the Physical AI Revolution Are Rewriting What Robots Can Do

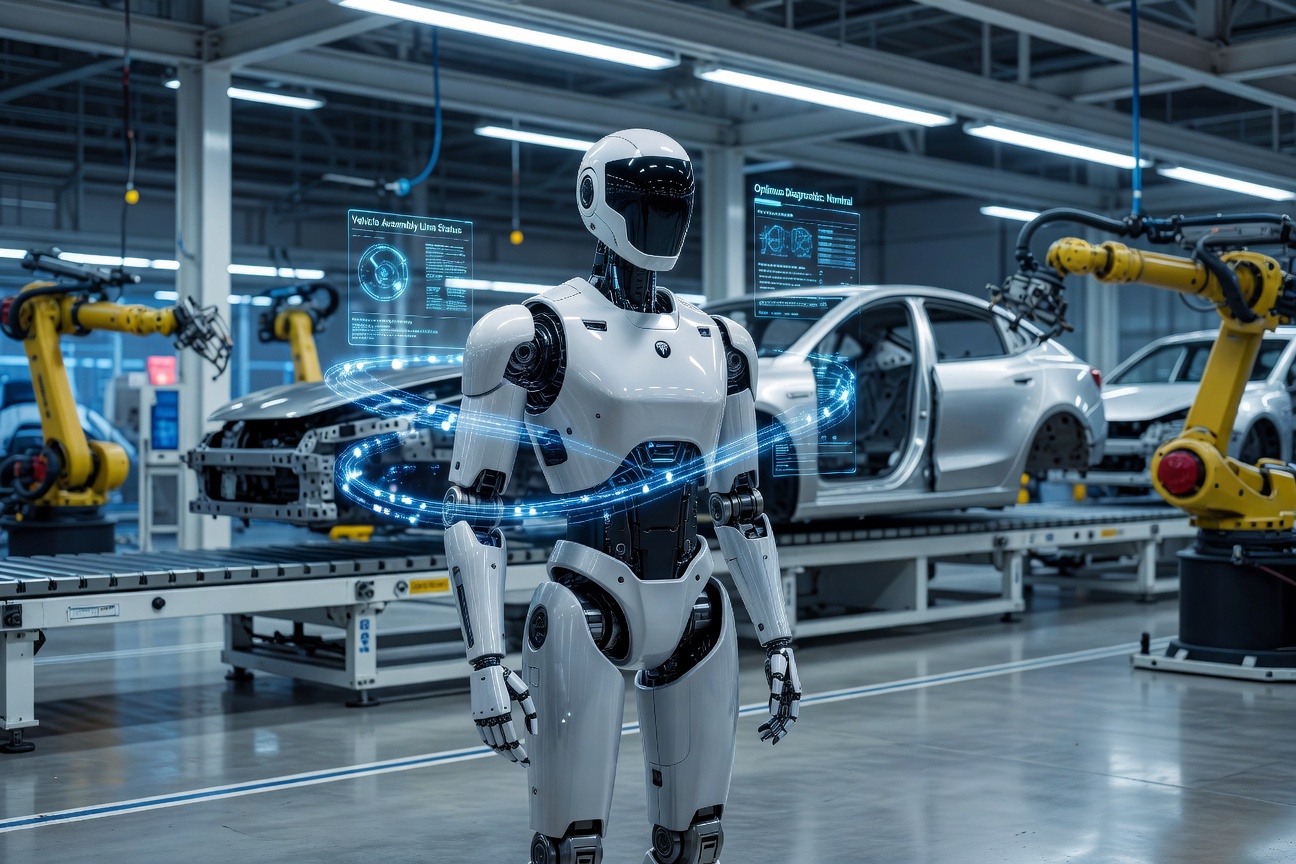

There is a quiet revolution unfolding on factory floors, in university robotics labs, and inside the gleaming corridors of Tesla's Gigafactories, and it moves at roughly the same walking speed as a human being. Humanoid robots, long the province of science fiction and expensive academic demonstrations, are crossing a threshold that engineers have chased for decades: they are becoming genuinely, practically useful. At the center of this transformation sits Tesla's Optimus program, a project that has matured with startling speed from a dancer in a robot suit performing on a stage in 2021 to a dexterous, AI-powered machine sorting battery cells and manipulating components with a precision that surprises even seasoned roboticists.

From Party Trick to Production Floor

The trajectory of Optimus Gen 2 tells a story not just about one company's ambitions, but about a broader inflection point in what engineers call physical AI, the discipline of embedding sophisticated machine intelligence into bodies that must navigate, touch, lift, and interact with a messy, unpredictable physical world. Unlike the clean sandbox of a language model predicting the next token, a humanoid robot must predict the next moment: the slight give of a cable harness, the precise torque needed to seat a bolt, the split-second balance correction when a conveyor belt judders unexpectedly.

Tesla's engineering teams have leaned hard into a philosophy that mirrors the company's approach to autonomous vehicles: train on massive real-world data, iterate relentlessly, and refuse to treat safety and capability as mutually exclusive. Optimus is now trained using a variant of the same neural network architecture that powers Tesla's Full Self-Driving suite, which means it can build on perception software developed against one of the largest fleets of real-world driving data ever assembled. The robot does not merely execute pre-programmed movements; it infers, adapts, and recovers.

Dexterity: The Final Frontier of Robotics

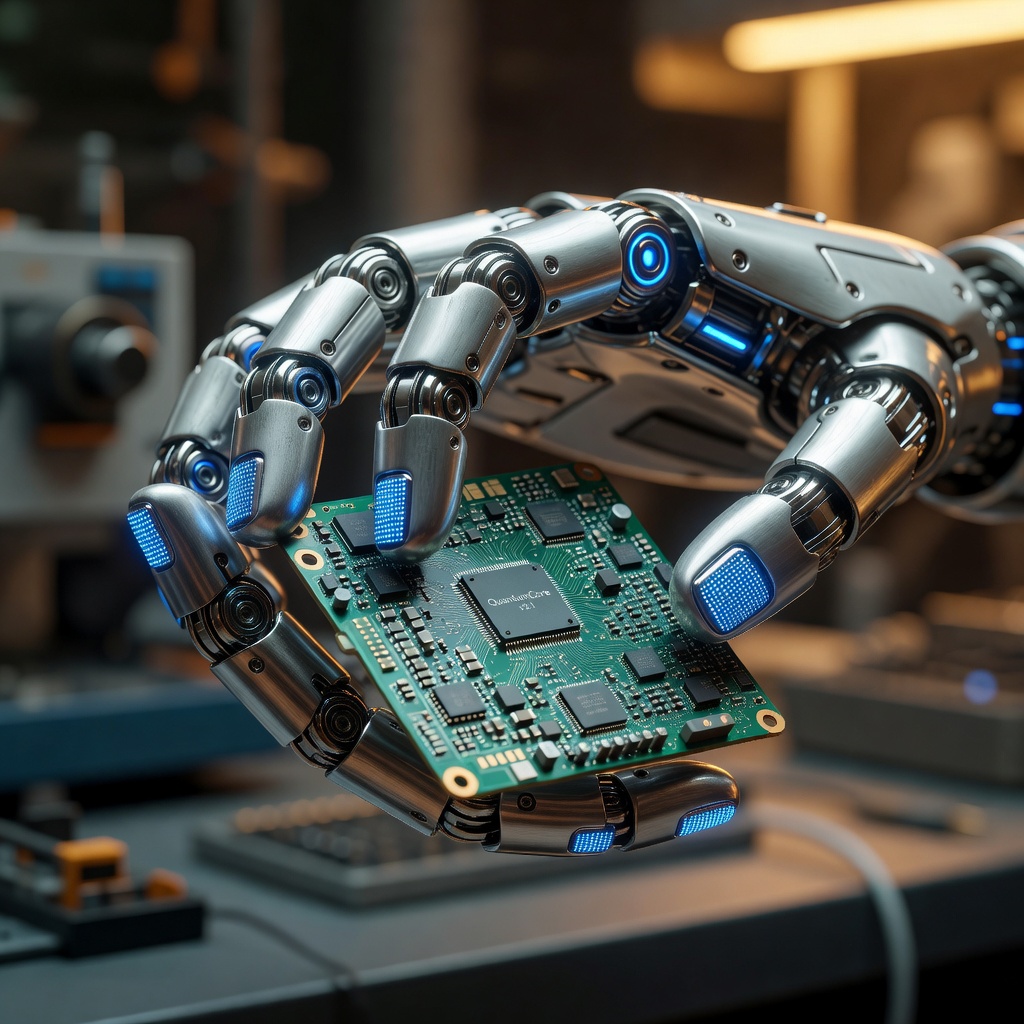

If locomotion was the first mountain humanoid robotics had to climb, dexterity is the Everest that has humbled generations of engineers. Each human hand contains 27 bones and is moved by more than 30 muscles, all governed by a nervous system so finely tuned that we can feel the difference between silk and cotton by brushing a fingertip across the surface. Replicating even a fraction of that capability in an electromechanical system has historically required hardware so expensive and fragile it could only survive in controlled laboratory conditions.

Tesla's answer has been a combination of custom actuators, tactile sensors embedded in fingertips, and a training regime that draws heavily on teleoperation data: recordings of human operators physically demonstrating tasks while wearing sensor-laden gloves. The resulting dataset teaches the robot not just what to do, but how a task feels when it is being done correctly. Elon Musk has described this feedback loop as giving the robot a sense of touch memory, an ability to build up an intuition for physical interaction that compounds over time the way a craftsperson's skill deepens with practice.

The results are visible in footage released by Tesla showing Optimus handling eggs without cracking them, threading components onto circuit boards, and performing yoga-style balance exercises that would have seemed implausible for a machine of its size just two years ago. These are not cherry-picked miracles; they are demonstrations of a system that has generalized across task types. That is the critical distinction between a robot that can do one thing well and one that can do many things adequately while steadily improving at all of them.

The Ecosystem Flowering Around Optimus

Tesla is not alone in this race, and that is arguably the most exciting development of all. A rich ecosystem of startups and established players, each attacking the physical AI problem from a different angle, is producing a Cambrian explosion of approaches that cross-pollinate in unexpected ways. Figure AI, Agility Robotics, 1X Technologies, and Boston Dynamics are all advancing their own humanoid platforms, and the competition is functioning precisely as competition should: it is accelerating everyone.

What unites the most promising of these programs is a shared conviction about data. The companies making the fastest progress are those that have found ways to generate enormous quantities of robot interaction data cheaply and at scale, whether through simulation environments that mirror the physics of the real world with high fidelity, through teleoperation campaigns that harvest human skill, or through the kind of fleet-scale deployment that Tesla uniquely can achieve by putting robots to work inside its own manufacturing ecosystem and learning from every hour of operation.

Why the Factory Floor Is the Perfect Classroom

There is a strategic genius embedded in Tesla's decision to deploy Optimus inside its own Gigafactories before selling it to anyone else. Manufacturing environments are simultaneously demanding enough to provide rich training signal and controlled enough to allow safe iteration. Every time an Optimus unit struggles with a new component geometry, slips on a wet floor, or misjudges the weight of a subassembly, that failure gets logged, analyzed, and used to improve the next software update pushed across the fleet. The factory is not just a place where robots work; it is the world's most sophisticated robotics training ground.

This approach also addresses the chicken-and-egg problem that has plagued robotics commercialization for years. Customers are reluctant to buy robots that have not been proven at scale; robots cannot be proven at scale without customers willing to absorb early-adoption friction. By being its own first customer, Tesla sidesteps that impasse entirely. By the time Optimus is offered externally, it will already have accumulated tens of thousands of operational hours across a diverse range of real manufacturing tasks.

The $20,000 Question: Can Humanoid Robots Actually Be Affordable?

Musk has stated publicly that Tesla's long-term target for Optimus pricing is somewhere in the range of $20,000 to $25,000 per unit, a figure that initially sounded like aspirational fantasy. Most humanoid robots today cost several times that amount and require expensive ongoing maintenance contracts. But the calculation changes when you consider Tesla's manufacturing pedigree. The company has spent fifteen years relentlessly engineering cost out of complex electromechanical systems, from battery packs to drive units to power electronics. The same supply chain expertise, the same vertical integration philosophy, and the same obsessive focus on design-for-manufacturability that helped bring the cost of electric vehicle battery packs down by roughly 90 percent over a decade are now being applied to robotics.

The economic implications are staggering in the most optimistic sense. A $20,000 humanoid robot capable of performing a broad range of physical tasks for 16 or more hours per day represents a labor cost per hour that would transform the economics of industries from logistics and agriculture to elder care and construction. This is not about replacing human workers as an end in itself; it is about augmenting human capacity in ways that address genuine scarcities: the shortage of people willing to do dangerous or repetitive tasks, the demographic crunch facing advanced economies with aging populations, and the chronic undersupply of skilled tradespeople in the construction sector.
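To make the per-hour claim concrete, here is a rough back-of-the-envelope sketch. Only the $20,000 price and the 16 hours per day come from the figures above; the five-year service life, the $2,000 annual upkeep, and the assumption of zero downtime are hypothetical placeholders chosen purely for illustration, not Tesla figures.

```python
from dataclasses import dataclass


@dataclass
class RobotCostAssumptions:
    purchase_price_usd: float   # article's low-end price target
    hours_per_day: float        # article's "16 or more hours per day"
    service_life_years: float   # hypothetical assumption
    annual_upkeep_usd: float    # hypothetical: maintenance plus energy
    days_per_year: int = 365    # hypothetical: no downtime at all


def cost_per_operating_hour(a: RobotCostAssumptions) -> float:
    """Spread purchase price and upkeep evenly over every operating hour."""
    total_hours = a.hours_per_day * a.days_per_year * a.service_life_years
    if total_hours <= 0:
        raise ValueError("operating hours must be positive")
    total_cost = a.purchase_price_usd + a.annual_upkeep_usd * a.service_life_years
    return total_cost / total_hours


baseline = RobotCostAssumptions(
    purchase_price_usd=20_000,
    hours_per_day=16,
    service_life_years=5,
    annual_upkeep_usd=2_000,
)
hourly = cost_per_operating_hour(baseline)
print(f"Estimated cost per operating hour: ${hourly:.2f}")
# prints: Estimated cost per operating hour: $1.03
```

Under these assumptions the robot costs about a dollar per operating hour. The result is highly sensitive to the placeholders: halving the service life or adding realistic downtime raises the figure quickly, which is why the inputs are kept explicit rather than buried in the formula.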

Physical AI as Infrastructure

Perhaps the most productive way to think about what Tesla and the broader physical AI industry are building is as infrastructure, not products. Just as the internet created a platform on which an unimaginable diversity of applications was built by millions of developers who had nothing to do with the original network architecture, a fleet of capable, affordable, programmable humanoid robots creates a substrate on which entirely new categories of economic activity become possible.

Small businesses that could never afford specialized automation equipment could subscribe to robot-as-a-service models. Disaster response organizations could deploy humanoid platforms into environments too dangerous for human responders. Researchers in biology, chemistry, and materials science could run physical experiments at a speed and scale currently impossible without armies of laboratory technicians. The compounding effects of broadly accessible physical AI may rival those of broadly accessible digital AI, and the two will not be separate phenomena for long. The robots being built today are already deeply integrated with large language models, vision transformers, and the full stack of modern AI. They are, in a very real sense, AI that has grown a body.

The steel hands are here. The living minds powering them are growing sharper by the day. And the world those two facts will build together is only beginning to come into focus.