Inside the Factory That Teaches Robots to Feel: Tesla's Optimus and the Dawn of Embodied Intelligence

The first thing you notice is the hum. Not the mechanical clatter you might expect from a room full of industrial arms and conveyor belts, but something subtler: a low, almost respiratory rhythm that pulses through Tesla's advanced manufacturing bay in Fremont as a row of Optimus Gen 2 robots work through their assigned tasks. Their torsos sway with a faint, organic quality. Their fingers, each one independently actuated and wrapped in a tactile sensor mesh that can resolve pressure gradients at a scale finer than a human fingernail, hover over battery cell trays with a precision that borders on the ceremonial. This is not a demonstration. There are no PR handlers. The robots are simply working, and the work is genuinely difficult.

The Body as the Classroom

For most of the past decade, the dominant assumption in AI research was that intelligence lived in the cloud—that if you fed a model enough data and gave it enough compute, it would eventually generalize to any problem you threw at it. The physical world was treated as an afterthought, a place where digital brilliance would eventually trickle down. Tesla, under Elon Musk's relentless pressure to compress timelines, is betting that assumption is exactly backwards. The body, in Tesla's emerging philosophy, is not a delivery mechanism for a brain. It is the curriculum.

This is the concept that researchers now call physical AI—or embodied intelligence—and it represents one of the most significant pivots in the history of robotics. Rather than training a model to simulate physical tasks in a virtual environment and then attempting to transfer that knowledge to hardware, Tesla's approach inverts the pipeline. Optimus units are deployed directly into live manufacturing environments, accumulating real sensorimotor experience. Every grip, every course-correction, every moment a robot pauses to re-examine an object it cannot immediately classify—all of it flows back into a continuously updated neural network that, over time, is building something closer to genuine physical intuition than any simulation has yet produced.

Fingers That Think

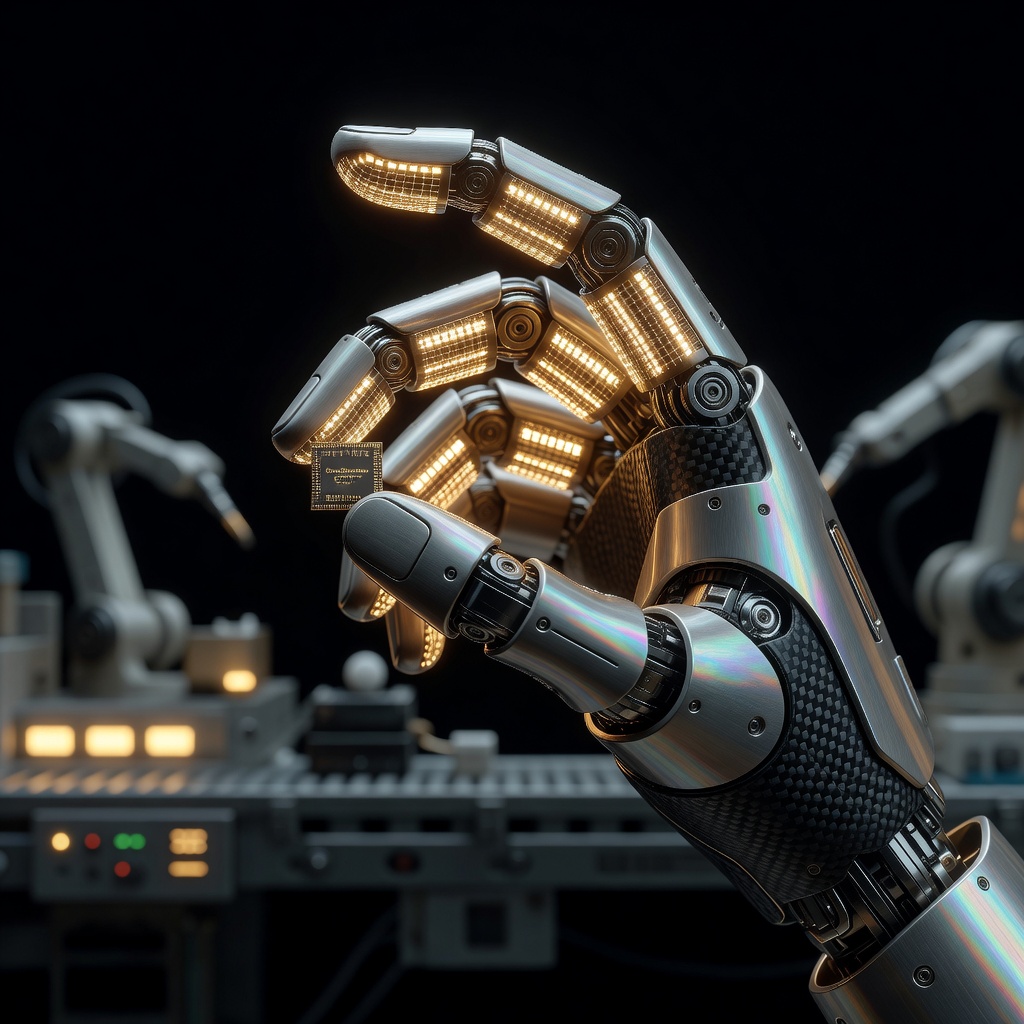

The hand is where the philosophy becomes concrete. Tesla's engineering team spent the better part of two years on Optimus's end effectors alone, and the obsession shows. Each finger contains a nested arrangement of actuators and strain gauges capable of exerting calibrated force across a range that spans from cradling a raw egg to tightening a bolt to spec. But the hardware is only half the story. Paired with a vision system that fuses data from multiple onboard cameras into a unified three-dimensional model of the robot's immediate environment, the hand effectively becomes a sensory organ with its own feedback loop.

What this means in practice is that Optimus does not simply execute pre-programmed motions. It reads the objects it encounters. A cable harness that has shifted two centimeters out of position is not a failure condition—it is data. The robot adjusts, logs the deviation, and the adjustment itself becomes training material for every other Optimus unit connected to Tesla's shared neural backbone. The fleet learns as a collective. Individual units contribute experience; the network compounds it. Musk has described this architecture as analogous to how a hive organism processes environmental feedback, though Tesla's engineers tend to reach for less dramatic metaphors when pressed.

The Simulation Gap, Closed by Sweat

One of the persistent criticisms leveled at humanoid robotics programs, Tesla's included, concerns the simulation-to-reality gap: the frustrating tendency for behavior that looks flawless in a physics engine to collapse the moment it encounters the irreducible messiness of the physical world. Dust, vibration, inconsistent lighting, materials that deform in ways no model perfectly predicted: reality has a talent for humbling overconfident algorithms.

Tesla's answer to this problem is not more sophisticated simulation. It is more reality. By deploying Optimus units into actual production environments early, and accepting that the robots will at first be slower, less reliable, and more supervision-intensive than a seasoned human worker, the company is deliberately front-loading the friction. The data generated by those early stumbles is, in Tesla's calculation, worth more than years of simulated perfection. It is a philosophy that mirrors what Tesla did with its autonomous driving program: ship imperfect capability, harvest real-world edge cases at scale, and iterate faster than any competitor running closed-course tests on a proving ground.

The results, by internal accounts, have been striking. Task completion rates for Optimus units assigned to battery cell sorting and wire harness installation have reportedly climbed sharply over successive software generations, with error rates dropping not because the hardware changed but because the neural model was trained on a growing body of authentic experience. The robots are not being reprogrammed. They are, in a meaningful sense, learning on the job.

Musk's Longer Bet

Elon Musk has never been shy about framing the Optimus program in civilizational terms. His public projections—millions of units, labor transformed, scarcity itself disrupted—tend to attract both breathless enthusiasm and reflexive skepticism in roughly equal measure. Strip away the hyperbole, though, and the underlying strategic logic is coherent and worth taking seriously.

Tesla is simultaneously one of the world's largest manufacturers and one of its most data-hungry AI organizations. The factory floor is not just a place where cars are built—it is a sensor array of almost incomprehensible richness, generating continuous streams of information about how physical processes succeed and fail. Deploying humanoid robots into that environment is not simply a labor arbitrage play. It is a way of building a training dataset for general-purpose physical AI that no competitor can replicate from a standing start. The manufacturing context provides the data. The data improves the model. The improved model makes the robots more capable. More capable robots expand the contexts in which they can operate—and so the loop accelerates.

If Tesla can carry this flywheel through its early, expensive, failure-prone rotations and reach the point where Optimus units can operate with genuine autonomy across a broad range of unstructured physical tasks, the addressable market is not the automotive sector. It is every sector that depends on human physical labor—which is to say, most of the economy.

The Human Texture of the Question

Back on the Fremont floor, a human technician pauses beside one of the Optimus units, watching it work through a wire routing sequence that has apparently presented it with an unfamiliar configuration. The robot slows, its cameras scanning, its fingers tentative. After a few seconds it finds its path and proceeds. The technician nods, almost imperceptibly, the way you might nod at a colleague who worked through a tricky problem without asking for help.

It is a small moment, easy to overlook. But it captures something that benchmark scores and investment decks consistently fail to convey: the actual texture of what is being attempted here. This is not a machine executing code. It is a system—hardware, software, accumulated experience, and an evolving capacity for contextual judgment—that is becoming something genuinely new. Not human. Not the robotic fantasies of science fiction. Something that has no adequate precedent and therefore no adequate name.

Physical AI, embodied intelligence, general-purpose robotics—all the labels feel slightly too small for what is beginning to emerge from those humming bays in Fremont. Whatever it becomes, the factory floor is where it is being born, one careful, uncertain, increasingly confident grip at a time.