The Last Upgrade: When Brain-Computer Interfaces Stop Being Medicine and Start Being You

Picture the year 2038. A structural engineer in Rotterdam sits down to review load-stress simulations for a hydrogen-powered bridge spanning the North Sea. She does not open a laptop. She does not type a query. She thinks the question, and within 200 milliseconds, a cascade of finite-element analysis data resolves into her visual field like a second layer of reality painted directly onto her perception. Her brain-computer interface has not merely assisted her. It has merged with her professional identity so completely that colleagues who lack one are quietly steered toward administrative roles. The upgrade, once a medical device reserved for quadriplegic patients, has become a career credential.

This is not science fiction. It is a reasonably conservative extrapolation of the trajectory that neural interface technology is currently traveling, and the institutions, industries, and philosophies that govern human life are almost entirely unprepared for its arrival.

From Restoration to Reinvention

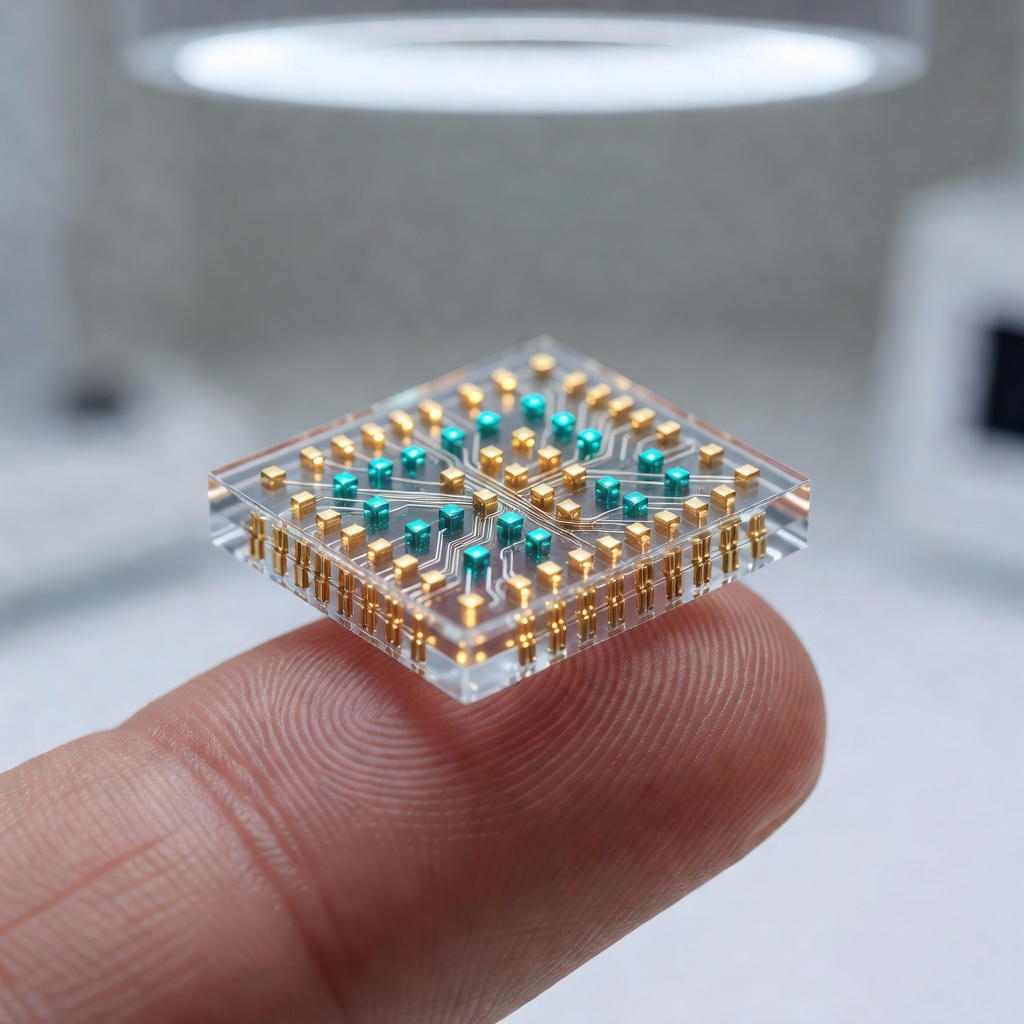

The current generation of brain-computer interfaces, including Neuralink's N1 chip and competing platforms from Synchron, Precision Neuroscience, and a growing cluster of university spinouts, is framed almost universally in therapeutic language. These devices restore lost motor function. They decode paralyzed patients' intent to communicate. They offer a lifeline to people locked inside unresponsive bodies. That framing is both accurate and strategically useful, because it threads the needle of regulatory approval while generating the kind of human-interest stories that fund the next research cycle.

But the architecture of these devices does not intrinsically limit them to restoration. A chip that can read motor cortex signals to move a cursor can, with software updates and expanded electrode arrays, begin reading prefrontal activity associated with planning, working memory, and abstract reasoning. The hardware roadmap that Neuralink and its peers are pursuing points inexorably toward higher-bandwidth, lower-latency connections between biological neurons and external compute infrastructure. Elon Musk has stated publicly that Neuralink's long-term ambition is to achieve a "tertiary layer" of human cognition sitting above the limbic and cortical systems, capable of interfacing directly with artificial intelligence at machine speed. The therapeutic era is the prologue. The augmentation era is the main event.

The Workplace Will Never Look the Same

Consider what happens to knowledge work when some practitioners can access, synthesize, and apply information at speeds that non-augmented colleagues simply cannot match. Economists studying the introduction of smartphones into professional environments documented measurable productivity divergences within three to five years of widespread adoption. A brain-computer interface is not a smartphone. It does not sit in a pocket. It sits inside the skull, operating continuously, learning the user's cognitive patterns, and increasingly anticipating needs before they are consciously articulated.

The labor market implications are staggering. Fields that currently prize raw cognitive throughput, including finance, software architecture, medicine, law, and engineering, would likely experience a bifurcation between augmented and non-augmented practitioners within a decade of consumer-grade interfaces reaching market. This is not a distant concern. Neuralink's stated goal is to bring the cost of implantation down to that of LASIK eye surgery, which currently runs between two and four thousand dollars in the United States. At that price point, augmentation becomes accessible to upper-middle-class professionals within the first product generation, and pressure cascades downward through the workforce with the same logic that made smartphones non-optional for most white-collar jobs by 2015.

The more unsettling question is not whether augmented workers will outperform non-augmented ones. They almost certainly will. The question is whether employers will be permitted to prefer them, whether insurers will price premiums around cognitive enhancement status, and whether educational systems designed around the assumption of a standardized biological cognitive baseline will simply collapse under the weight of their own irrelevance.

Education at the Edge of Obsolescence

The university system as currently constructed is, at its core, a bandwidth problem. Human beings can only absorb, retain, and apply new information so quickly. Curricula are paced to that biological constraint. Degrees take four years because four years is roughly how long it takes a standard human brain to move from foundational literacy in a discipline to functional professional competence.

A high-bandwidth neural interface connected to curated knowledge repositories and AI tutoring systems would not eliminate the need for deep learning, but it would radically compress the timeline for acquiring surface-level competency. Medical students currently spend two years memorizing anatomical and pharmacological data before touching a patient. An augmented student with real-time recall support and AI-assisted diagnostic overlays could reasonably begin supervised clinical practice far earlier, reserving institutional education for the judgment, ethics, and pattern recognition that resist digitization.

Universities that adapt will pivot toward teaching wisdom rather than information. Those that do not will find themselves in the business of issuing credentials for knowledge that graduates can now access on demand, which is a business model with a very short runway.

Identity, Continuity, and the Self That Gets Upgraded

Perhaps the most underexplored dimension of the coming augmentation era is the question of personal identity under conditions of continuous cognitive modification. Philosophers have long wrestled with the Ship of Theseus problem, the puzzle of whether an object that has had all its components replaced remains the same object. Brain-computer interfaces introduce a neurological variant of this paradox.

If an interface gradually reshapes your memory consolidation patterns, augments your emotional regulation, accelerates your processing speed, and begins feeding you synthesized insights faster than your biological reasoning can independently generate them, are you still the person who received the implant? At what point does the augmented self diverge meaningfully from the biological baseline? These are not merely philosophical puzzles. They are questions with direct legal, psychological, and social consequences. Courts will eventually need to determine whether a decision made in a state of neural augmentation carries the same legal weight as one made without it. Therapists will need frameworks for treating identity disorders that arise not from trauma but from software updates.

Musk himself has gestured at this tension, suggesting that the symbiosis between human cognition and AI is the only viable path to preserving meaningful human agency in a world where artificial general intelligence becomes dominant. The augmented human, in this framing, is not a diminished human. It is a human that survives. But survival through transformation raises its own profound questions about what, exactly, is being preserved.

The Infrastructure of Thought

There is one final dimension that deserves serious attention from technologists and policymakers alike: the infrastructure layer that brain-computer interfaces will require. High-bandwidth neural interfaces that communicate with external AI systems are not standalone devices. They are endpoints on a network. That network requires servers, power, cybersecurity architecture, and corporate governance. It also creates a data layer of unprecedented intimacy. The information generated by a continuous neural interface includes not just commands and queries but the raw material of cognition: attention patterns, emotional states, decision latencies, and the subtle signatures of thought before it reaches conscious articulation.

Who owns that data? Who secures it? What happens when the company maintaining your cognitive infrastructure is acquired, goes bankrupt, or is compelled by a government to produce records? These are the questions that will define the politics of the augmentation era as surely as bandwidth and electrode counts define its technology. The race to wire humanity's most intimate organ into a commercial network is accelerating. The regulatory and philosophical frameworks needed to govern that race are not. Somewhere in that gap lives the most consequential story of the next thirty years.


The last upgrade, whenever it arrives, will not announce itself with a press release. It will arrive quietly, one electrode at a time, until the question of what it means to think for yourself requires an entirely new answer.