Can a Robot Truly Understand the World? The Scientific Debate Tearing Physical AI Apart

Picture a chess grandmaster who has never once felt the satisfying weight of a rook in their fingers, never experienced the anxiety of a ticking clock overhead, never sat across from a human opponent whose breathing changes when cornered. Now ask whether that grandmaster truly understands chess, or whether they have merely mastered a supremely complex pattern-matching exercise. This is, at its philosophical core, the debate that is quietly fracturing the community of researchers watching Tesla's Optimus program advance at a pace that seems to outrun the theory designed to explain it.

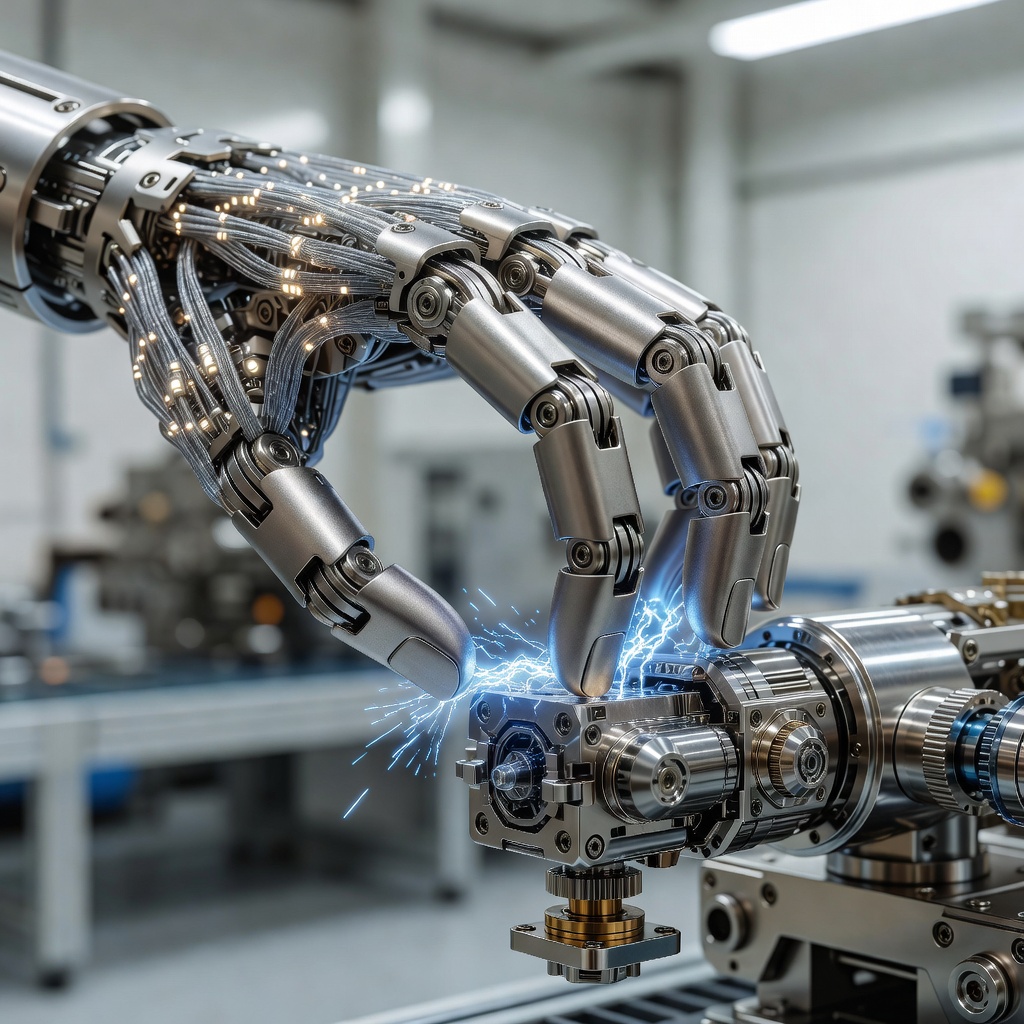

Tesla's humanoid robot effort has moved from an awkward stage-prop debut in 2021 to a machine that can sort battery cells, navigate dynamic factory floors, and demonstrate what the company calls "dexterous manipulation" with a fluency that would have seemed implausible just three years ago. Elon Musk has publicly projected Optimus production in the millions within the coming years, and Tesla's AI team is investing heavily in what the industry now clusters under the banner of "physical AI": systems that do not merely process language or classify images but act inside the three-dimensional, unpredictably messy physical world. The ambition is enormous. The consensus on whether it can work the way Tesla envisions it? Fractured, contentious, and genuinely fascinating.

The Embodiment Hypothesis: A Field Divided

For decades, a strand of cognitive science called embodied cognition has argued that intelligence is inseparable from having a body. Thinkers from Francisco Varela to Andy Clark built careers on the premise that the brain does not operate like a central computer issuing commands to passive limbs. Instead, cognition is distributed, relational, and deeply entangled with sensory-motor feedback loops. A hand that grasps an object is not merely executing a brain's instruction; it is participating in an act of knowing. From this vantage point, Tesla's bet on humanoid robots looks almost philosophically correct. If you want an AI that can function in a world built for humans, perhaps you need a machine shaped like one, moving like one, experiencing friction, weight, and gravity the way one does.

But a competing school of thought, anchored more firmly in computational neuroscience and reinforcement learning theory, pushes back hard. These researchers argue that the shape of the vessel matters far less than the architecture of the learning system inside it. What Tesla is building, in this view, is an extraordinarily capable behavior-cloning engine wrapped in a humanoid chassis. Optimus watches human workers perform tasks, ingests that data through video and sensor streams, and learns to reproduce the behavior. It is impressive. It is useful. Whether it constitutes anything resembling genuine understanding of the physical world, however, is a question these skeptics answer with a firm and uncomfortable no.
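For readers who want the mechanics behind the skeptics' description, behavior cloning in its simplest form is ordinary supervised learning on recorded (observation, action) pairs. The sketch below is a toy illustration only, not a description of Tesla's pipeline: the one-dimensional gripper task, the demonstration data, and every function name are invented here for the sake of the example.

```python
# Toy behavior cloning: fit a policy to (observation, action) pairs
# recorded from a demonstrator. The task and all names are hypothetical.

def expert_policy(object_width_cm):
    """Stand-in for a human demonstrator: close the gripper to 90% of the object's width."""
    return 0.9 * object_width_cm


def fit_linear_policy(observations, actions):
    """Ordinary least squares for: action = slope * observation + intercept."""
    n = len(observations)
    mean_x = sum(observations) / n
    mean_y = sum(actions) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(observations, actions))
    var = sum((x - mean_x) ** 2 for x in observations)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return slope, intercept


# "Demonstrations": object widths the demonstrator saw, and what they did.
demo_obs = [2.0, 3.0, 4.0, 5.0, 6.0]
demo_act = [expert_policy(w) for w in demo_obs]

slope, intercept = fit_linear_policy(demo_obs, demo_act)


def cloned_policy(object_width_cm):
    """The learned imitation of the demonstrator."""
    return slope * object_width_cm + intercept
```

The cloned policy reproduces the demonstrator's gripper opening for widths it has seen and for widths in between, yet nothing in it represents why that opening is the right one. That gap between reproducing behavior and representing its reasons is what the skeptics are pointing at.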

The Data Problem at the Heart of Dexterity

Tesla's approach to training Optimus leans heavily on the same data-at-scale philosophy that powered its Full Self-Driving program. The theory is elegant: expose the system to enough varied real-world physical interactions, and generalizable competence will emerge. The company has spoken about using its human workforce essentially as a live demonstration dataset, capturing manipulation tasks across its manufacturing operations and feeding that back into the robot's neural networks. It is, in miniature, the same bet that large language models made about text: scale cures brittleness.

Researchers who specialize in robotic manipulation are not uniformly convinced. The fundamental challenge is what engineers call the "long tail" of physical reality. Language, for all its complexity, is assembled from a finite vocabulary of discrete symbols. Physical interaction is continuous and high-dimensional. The exact angle at which a bolt is misaligned, the slight tackiness of a surface on a humid day, the way a cardboard box deforms differently depending on what is packed inside: these are not edge cases. They are the substance of the world. A system trained on millions of manipulation episodes can still fail catastrophically when confronted with a situation that falls outside the implicit distribution of its training data. And the failure is often not a gradual degradation: a policy can perform well right up to the edge of what it has seen and then break abruptly, sometimes spectacularly, and potentially dangerously in proximity to human workers.
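The distribution problem can be made concrete with a deliberately tiny example. In the sketch below, a hypothetical physical response (here simply x squared, chosen for illustration and not drawn from any real robot) is sampled over a narrow range, a straight line is fitted to those samples, and the fit is then queried far outside that range. The toy shows only the core point: low error on the training range says very little about error beyond it.

```python
# Toy distribution shift: a model that looks accurate on its training
# range can be badly wrong outside it. All quantities are hypothetical.

def true_response(x):
    """Stand-in for some nonlinear physical relationship."""
    return x ** 2


def fit_line(xs, ys):
    """Ordinary least squares for: y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    return slope, mean_y - slope * mean_x


# Training data covers only a narrow band of conditions.
train_x = [1.0, 1.05, 1.1, 1.15, 1.2]
train_y = [true_response(x) for x in train_x]
slope, intercept = fit_line(train_x, train_y)


def model(x):
    return slope * x + intercept


# Worst-case error on the conditions the model was trained on.
in_range_error = max(abs(model(x) - true_response(x)) for x in train_x)

# Error on a condition far outside the training band.
novel_x = 3.0
out_of_range_error = abs(model(novel_x) - true_response(novel_x))
```

On the training band the line is off by well under one percent of the true value; at the novel input it misses by more than a third. A benchmark built only from in-range conditions would never reveal the second number.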

Tesla's counter-argument, implicit in its engineering choices, is that the answer to brittleness is more data, faster iteration, and tighter sim-to-real transfer pipelines. The company's simulation infrastructure allows it to generate synthetic training scenarios at a volume no physical demonstration dataset could match. Whether simulated physics is a faithful enough proxy for the genuine article to close the generalization gap remains one of the most actively contested empirical questions in the field right now.
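One widely used technique for narrowing the sim-to-real gap is domain randomization: instead of trusting a single simulated physics configuration, each training episode draws its physical parameters at random, in the hope that the real world ends up looking like one more variation. Nothing public confirms how Tesla's simulator is configured, so the sketch below is purely illustrative. The parameter names and ranges are assumptions made up for this example.

```python
import random

# Toy domain randomization: draw different physics parameters for every
# simulated episode. Parameter names and ranges are illustrative assumptions.


def sample_sim_params(rng):
    """Draw one randomized set of physics parameters for a simulated episode."""
    return {
        "friction": rng.uniform(0.2, 1.0),           # surface friction coefficient
        "mass_kg": rng.uniform(0.1, 2.0),            # mass of the manipulated object
        "sensor_noise_std": rng.uniform(0.0, 0.05),  # noise added to sensor readings
    }


rng = random.Random(0)  # fixed seed so the run is reproducible
episodes = [sample_sim_params(rng) for _ in range(1000)]

# How much of the friction range did the sampled episodes actually cover?
friction_seen = (
    min(e["friction"] for e in episodes),
    max(e["friction"] for e in episodes),
)
```

The limitation is visible in the code itself: the policy only ever experiences variation along the axes someone thought to randomize, within the ranges someone chose. Humidity-dependent tackiness or an oddly packed box helps only if a parameter for it exists in the simulator.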

Grounding the Machine: What Does "Understanding" Even Require?

The philosophical dimension of this debate refuses to stay politely academic. At its center is what researchers call the "symbol grounding problem": the challenge of connecting abstract computational representations to the physical referents they are supposed to encode. When a large language model says the word "heavy," it has learned statistical relationships between that word and thousands of other words. It has never felt weight. When Optimus picks up a heavy object, its force-torque sensors register resistance and its motor controllers adjust. Is that grounding? Or is it merely a more sophisticated version of the same statistical relationship, now encoded in physical feedback rather than text co-occurrence?

Some researchers argue the distinction is practically meaningless. If the robot behaves as though it understands weight across a sufficiently diverse range of contexts, the philosophical question of whether it "truly" understands is an unfalsifiable distraction. Pragmatists in the field point to the history of AI progress and note that nearly every capability once considered to require genuine understanding has eventually yielded to scaled pattern matching. The goalposts have moved repeatedly, and perhaps they are moving again.

Others find this pragmatism troubling precisely in the context of physical AI. A language model that hallucinates a historical fact produces a wrong answer. A physical AI system that hallucinates a property of its environment, misjudging the structural integrity of a surface or the mass distribution of an object it has never encountered, produces a physical consequence. The stakes of getting the epistemology wrong are not abstract.

Where Tesla Sits in the Academic Crossfire

What makes Tesla's position unusual in this debate is that the company is not primarily a research institution publishing findings and inviting peer scrutiny. It is a manufacturer with production targets and investor expectations. This creates a distinctive dynamic: Tesla is generating more real-world physical AI deployment data than almost any academic lab could hope to accumulate, but it is not releasing that data into the scientific commons in any systematic way. The researchers debating the limits of physical AI are largely doing so without access to the empirical evidence that Tesla's operations are quietly generating at scale.

This opacity cuts both ways. It means critics cannot definitively falsify Tesla's approach. But it also means that when Tesla makes bold claims about Optimus capabilities, the scientific community has no principled way to verify them independently. Musk's prediction that humanoid robots will outnumber humans within decades exists in an empirical vacuum that neither supporters nor detractors can fill with hard data.

What is visible from the outside is suggestive rather than conclusive. Demonstrations of Optimus performing factory tasks have grown progressively more complex and less scripted over successive public showings. The robot's gait has become notably more fluid. Its recovery behaviors, the micro-adjustments it makes when an object slips or a step is uneven, have the qualitative character of learned robustness rather than brittle choreography. Whether that observed robustness generalizes to truly novel physical situations, nobody outside Tesla's walls can say with confidence.

The Productive Discomfort of an Unsettled Science

Perhaps the most honest framing of where physical AI stands in 2025 is this: it is a field where the engineering is outpacing the theory, the commercial deployment is outpacing the safety science, and the philosophical questions are not merely decorative but have direct implications for how these systems fail and how badly those failures matter.

Tesla is not the only actor in this space. Boston Dynamics, Figure AI, Agility Robotics, and a growing cohort of well-funded startups are all racing along parallel tracks. But Tesla occupies a peculiar position because of its manufacturing scale, its access to real-world deployment environments, and the singular intensity of public attention that surrounds anything Elon Musk touches. If Optimus succeeds in ways that change industrial labor at meaningful scale, the debate about whether it truly understands the physical world will become less a philosophical puzzle and more a policy emergency.

The scientists arguing about cognition and grounding and the hard problem of physical intelligence are not indulging an academic luxury. They are, whether Tesla's engineers fully appreciate it or not, doing the foundational work that will eventually determine whether physical AI systems can be trusted, corrected, governed, and safely scaled. The factory floor does not care about epistemology. But the society that factory floor feeds into very much does.

"The question is not whether the robot can do the job. The question is whether it knows what it is doing. And those are not the same question."

Tesla's Optimus will almost certainly keep getting better by every measurable benchmark. It will handle more tasks, adapt to more environments, and eventually operate in spaces far beyond the factory. Whether it will ever cross the line from spectacular mimicry to genuine physical comprehension is a question that may not have a clean answer. But asking it rigorously, publicly, and without commercial pressure to look the other way might be the most important intellectual work happening anywhere in technology right now.