When the Robot Corrects Itself: Tesla's Optimus and the Quiet Breakthrough of Self-Supervised Physical AI

Picture a workbench in late 2024. A Tesla Optimus unit reaches for a cylindrical battery cell, misjudges the angle by roughly four degrees, and feels the object begin to roll. No alarm sounds. No engineer intervenes. The robot's own proprioceptive feedback loop fires, its fingers recalculate pressure distribution in under 80 milliseconds, and the grasp succeeds. What just happened is not a parlor trick. It is the earliest, clearest signal yet that physical AI, the discipline of teaching machines to understand the world through their own bodies, has crossed a threshold that researchers have been circling for a decade.

The Error Is the Lesson

Traditional industrial robots are programmed around error avoidance. Every joint angle, every grip force, every trajectory is choreographed in advance so that failure becomes a statistical rarity rather than a learning event. Tesla's approach with Optimus inverts this philosophy almost completely. Rather than eliminating the fumble, the engineering team has built a system that treats each fumble as a data point of extraordinary richness, capturing not just what went wrong but the exact sensorimotor context in which the error occurred and what correction strategy the model spontaneously generated to recover.

This is self-supervised learning applied to physical space, and it is considerably harder than it sounds. In language models, the training signal is cheap: predict the next token, compare to ground truth, adjust weights, repeat at planetary scale. In physical AI, the training signal costs time, actuator wear, compute, and occasionally a dropped component. Tesla's advantage, which Elon Musk has cited repeatedly in earnings calls and public statements, is that Optimus is being trained inside an environment that already generates enormous volumes of real-world interaction data: the Tesla factory floor. Every battery pack assembled, every panel aligned, every bolt torqued is a training episode whose outcome the robot can sense for itself, and each one feeds back into the model's evolving understanding of how matter actually behaves.
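To make the "predict, compare, adjust" loop concrete in a physical setting, here is a minimal sketch of a self-supervised forward model. It is an illustration only, not a description of Tesla's training pipeline: the one-dimensional "world," the function names `simulate_step` and `train_forward_model`, and every numeric value are assumptions invented for the example. The point it shows is that the target for each prediction is simply the next sensor reading, so no human label is needed.

```python
import random


def simulate_step(position, action, gain=0.8, noise=0.0, rng=None):
    """Hypothetical toy world: the next position is the old one plus gain * action."""
    jitter = rng.gauss(0.0, noise) if rng and noise else 0.0
    return position + gain * action + jitter


def train_forward_model(steps=2000, lr=0.05, seed=0):
    """Learn w so that predicted_next = position + w * action.

    The training target is the next observation itself, which is what
    makes the loop self-supervised rather than label-driven.
    """
    rng = random.Random(seed)
    w = 0.0          # the model's current belief about the actuator gain
    position = 0.0
    for _ in range(steps):
        action = rng.uniform(-1.0, 1.0)
        predicted = position + w * action                               # predict
        observed = simulate_step(position, action, noise=0.01, rng=rng)  # act and sense
        error = predicted - observed                                    # compare
        w -= lr * error * action   # gradient step on 0.5 * error**2       adjust
        position = observed
    return w


learned_gain = train_forward_model()
print(f"learned gain: {learned_gain:.3f} (the toy world's true gain is 0.8)")
```

Each pass through the loop costs one simulated movement, which is the article's point in miniature: on real hardware that same pass costs time and actuator wear instead of a few microseconds of compute.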

What "Physical AI" Actually Means in 2025

The phrase gets thrown around with the looseness typical of any technology in its hype phase, so it is worth being precise. Physical AI refers to machine intelligence that does not merely process symbolic representations of the world but actively reasons through continuous interaction with physical reality. The robot cannot consult a lookup table for how a slightly damp surface changes friction coefficients. It must sense, model, predict, and act within a time window that is measured in milliseconds rather than the leisurely inference cycles affordable in cloud-based AI. Tesla's neural network architecture for Optimus reportedly borrows heavily from the vision transformer stack developed for Autopilot, repurposed to interpret spatial and tactile signals rather than road geometry. The conceptual bridge between driving a car and navigating a cluttered warehouse shelf turns out to be shorter than most outsiders expected.

Crucially, the system does not rely on high-definition maps of the environment. Unlike earlier generations of factory robots that required laser-measured workspace calibrations before they could do anything useful, Optimus is designed to walk into a novel setting, build a real-time spatial model, and begin operating productively within minutes. Tesla engineers have described this capability internally as "cold start competency," and it represents one of the harder unsolved problems in robotics. Most competing humanoid platforms still require significant environment preparation. Optimus, at least in controlled demonstrations, increasingly does not.

"The robot that can correct itself without being told it made a mistake is not just a better robot. It is a fundamentally different category of machine."

The Data Flywheel Nobody Is Talking About

Tesla's most underappreciated structural advantage in the humanoid race is not its actuator design or its neural network architecture. It is the sheer volume of embodied interaction data it can generate by deploying Optimus units inside its own manufacturing operations at scale. Musk has stated publicly that Tesla intends to deploy thousands of Optimus robots in its factories before the end of 2025, with the number eventually reaching into the tens of thousands. Each unit operating in a real production environment is simultaneously a product and a data-collection node, feeding observations back into a centralized training pipeline that continuously improves the shared model all units run on.

This creates a compounding effect that competitors without vertically integrated manufacturing operations simply cannot replicate at the same pace. Boston Dynamics builds impressive robots. Figure AI and Agility Robotics have demonstrated genuine capability. But none of them have a captive, high-stakes physical environment where dozens of robot-hours of nuanced manipulation data can be generated every single shift. Tesla does. And the implication is that the capability gap between Optimus and its nearest competitors is likely to widen over the next 18 months rather than narrow, because the training data advantage scales with deployment, and deployment is currently accelerating.

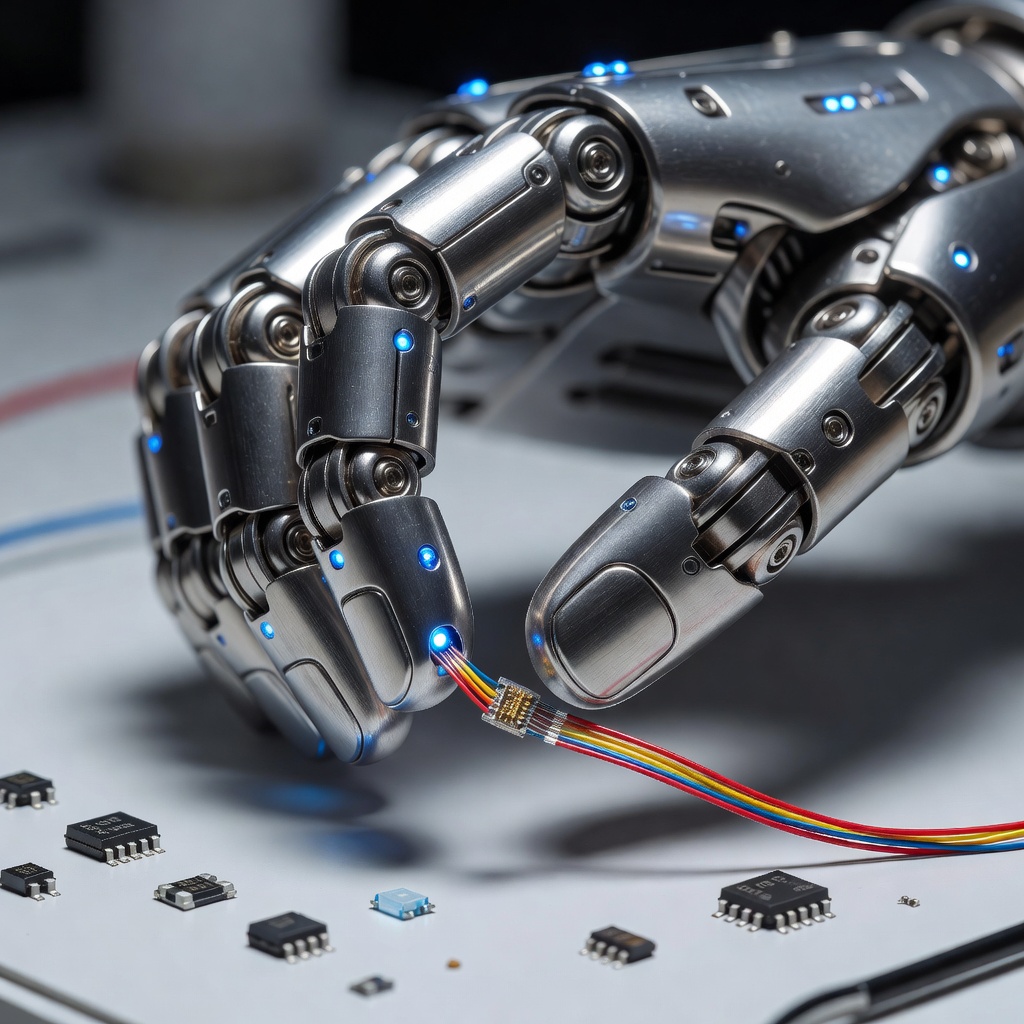

The Hands Problem: Still Unsolved, Rapidly Improving

If there is an honest vulnerability in the current Optimus program, it lives at the fingertips. Dexterous manipulation, defined as the ability to handle objects of varying size, weight, compliance, and surface texture with the fluid adaptability of a human hand, remains the most punishing benchmark in all of physical AI. The human hand contains 27 bones, 29 joints, and more than 120 ligaments. It is served by three distinct nerve pathways providing overlapping sensory coverage. Replicating even a fraction of this in a machine that must be manufactured at consumer-electronics cost is an engineering problem of considerable cruelty. Tesla's current Optimus hand design uses 11 degrees of freedom and integrates force sensors at each fingertip. For tasks like folding fabric or inserting a ribbon cable into a connector, performance has improved measurably across each hardware revision. But it still falls short of human capability in scenarios requiring rapid grip strategy switches, as when picking up a bag of irregular objects where the center of mass shifts unpredictably during the lift.

The AI side of the hands problem is, if anything, harder than the mechanical side. Teaching a model what a delicate object feels like before it breaks requires either enormous quantities of failure data (expensive and wasteful) or highly accurate simulation environments that transfer credibly to physical reality. Tesla has invested heavily in simulation, building what engineers refer to as a "physics-accurate digital twin" environment where Optimus can practice manipulation tasks at accelerated time scales before attempting them on the factory floor. The sim-to-real transfer gap, a chronic problem in robotics research, is narrowing with each generation of both the simulator and the physical hardware. Whether it narrows fast enough to meet Musk's ambitious production timelines is the question the industry is watching most closely.
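One widely used way to attack the sim-to-real gap is domain randomization: vary the simulator's physical parameters across many trials so that whatever the robot learns holds up when reality differs from any single simulated world. Nothing in Tesla's public statements confirms that Optimus is trained this way, so treat the following as a toy instance of the general technique. The function name `robust_grip_force`, the friction range, and the load are all hypothetical.

```python
import random


def robust_grip_force(load_n, friction_range, trials=1000, seed=0):
    """Pick a grip force that holds the load in every randomized simulated world.

    Each trial draws a different friction coefficient, standing in for the
    uncertainty between the simulator and the real surface.
    """
    rng = random.Random(seed)
    low, high = friction_range
    sampled_frictions = [rng.uniform(low, high) for _ in range(trials)]
    # Force needed to hold the load in each sampled world: load / friction.
    required_forces = [load_n / mu for mu in sampled_frictions]
    # Choose the worst case so the grasp survives all sampled conditions.
    return max(required_forces)


grip = robust_grip_force(load_n=2.0, friction_range=(0.3, 0.7))

# A "real" surface whose friction falls inside the randomized range.
real_friction = 0.5
print("holds on the real surface:", grip * real_friction >= 2.0)
```

The trade-off is visible even in this small sketch: widening the friction range makes the grasp safer on unknown surfaces but pushes the chosen force upward, which is exactly what a delicate object cannot tolerate.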

Beyond the Factory: The Larger Stakes

Musk has been candid, perhaps startlingly so, about his belief that Optimus represents a larger economic transformation than any other product Tesla or SpaceX has ever built. His framing is straightforward: if a humanoid robot can perform the full range of physical labor that humans currently perform, the constraint on economic output imposed by the finite size of the human labor force effectively dissolves. The implications cascade from there into territory that economists, ethicists, and policymakers are only beginning to map seriously.

For the tech-builder community specifically, the near-term opportunity is more concrete. Tesla has signaled interest in eventually making Optimus available for commercial deployment outside its own facilities, which would place a general-purpose physical AI platform in the hands of researchers, manufacturers, and developers who have never had access to anything remotely comparable. The software stack, if opened to any degree, would immediately become one of the most important development platforms in the industry. Think of it as the physical-world equivalent of cloud compute: infrastructure that lets builders focus on application logic rather than the brutal low-level engineering of making a robot stand up and not drop things.

The Correction Loop as Competitive Moat

Return for a moment to that workbench scenario. The robot caught its own mistake. It did not need a label, a reward signal, or a human annotation to know that a rolling battery cell was a problem requiring a solution. It generated the correction from its own sensorimotor model of what a successful grasp feels like. This capacity, sometimes called intrinsic error supervision in the academic literature, is what separates a sophisticated automation tool from something that might reasonably be called an intelligent physical agent.
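The logic of that loop can be sketched in a few lines. This is a deliberately simplified illustration, not Tesla's controller: the function name `correct_grasp`, the friction model, the force step, and the 10-millisecond tick are assumptions, and only the 80-millisecond budget comes from the workbench scenario. The error signal is the gap between what the robot's internal model expects a good grasp to feel like (no slip) and what it actually feels.

```python
def correct_grasp(load_n=2.0, friction=0.5, grip_n=3.0,
                  step_n=0.5, tick_ms=10, budget_ms=80):
    """Raise grip force until the felt slip matches the expected slip of zero.

    Returns (final_grip_n, elapsed_ms, recovered).
    """
    elapsed_ms = 0
    while elapsed_ms <= budget_ms:
        expected_slip = 0.0                    # what a successful grasp feels like
        holding_n = friction * grip_n          # load the current grip can hold
        felt_slip = max(0.0, load_n - holding_n)
        surprise = felt_slip - expected_slip   # self-generated error signal
        if surprise == 0.0:
            return grip_n, elapsed_ms, True
        grip_n += step_n                       # corrective action: squeeze harder
        elapsed_ms += tick_ms
    return grip_n, elapsed_ms, False


final_grip, elapsed, recovered = correct_grasp()
print(final_grip, elapsed, recovered)

# A load too heavy to recover within the time budget.
_, _, recovered_heavy = correct_grasp(load_n=10.0)
print(recovered_heavy)
```

No label, reward, or annotation appears anywhere in the loop. The only supervision is the mismatch between prediction and sensation, which is the property the phrase "intrinsic error supervision" is pointing at.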

Tesla is not the only organization pursuing this capability, but it may be the only one with the combination of hardware scale, proprietary training data, and financial runway to push it past the research prototype stage and into the kind of mass deployment that generates the feedback loops necessary for real-world robustness. The robots will get better because they are working. They are working because Tesla is building them at scale. And they are being built at scale because each generation proves, in the only laboratory that ultimately matters, that a machine can reach for the world, miss slightly, and teach itself not to miss again.